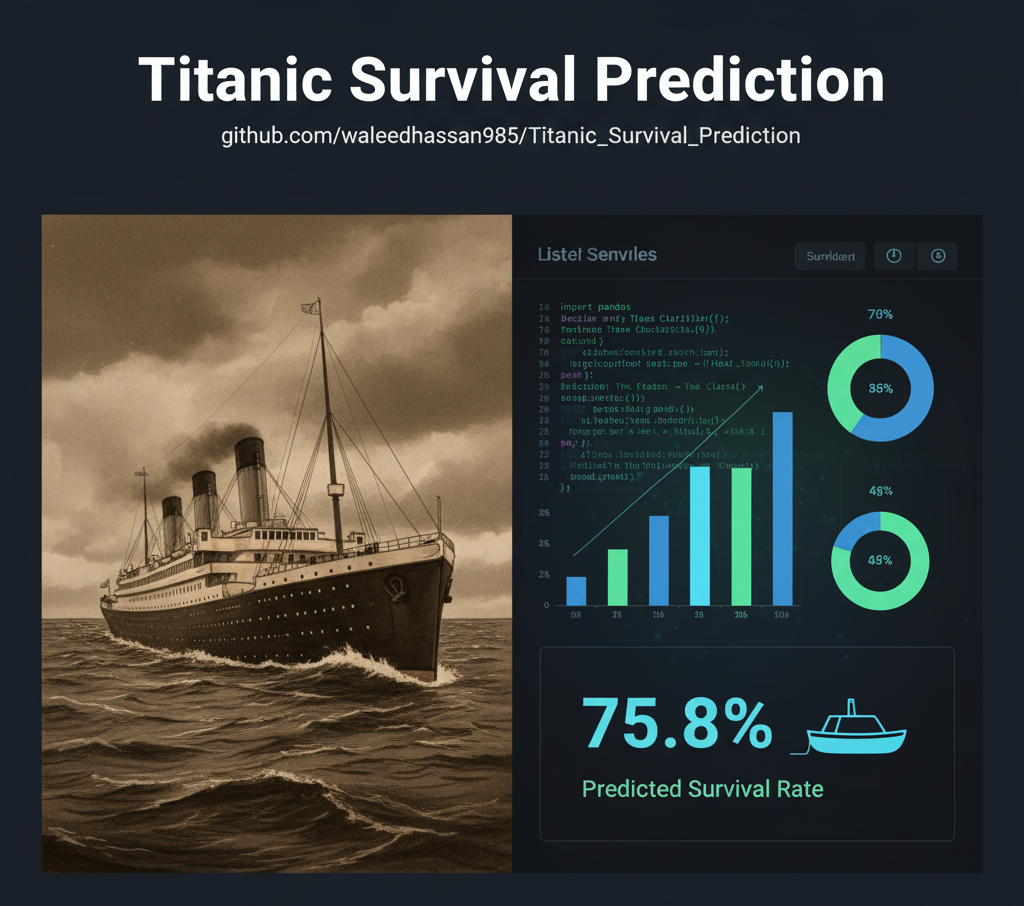

Titanic Survival Prediction Using Machine Learning

Comparative analysis of 5 ML algorithms achieving 76% accuracy with Naive Bayes classifier

Project Overview

This project analyzes the famous Titanic passenger dataset to predict survival outcomes using various machine learning classification algorithms. The analysis includes comprehensive exploratory data analysis, feature engineering, model training, and comparative evaluation of multiple ML techniques.

Challenge

The Titanic dataset presents a classic binary classification problem: predicting whether a passenger survived the disaster based on features like age, sex, ticket class, fare, and family relationships. The challenge involves handling missing data, engineering meaningful features, and selecting the optimal algorithm for maximum accuracy.

Technical Approach

Exploratory Data Analysis (EDA)

Analyzed survival patterns across different demographics and passenger classes. Visualized relationships between features like gender, age groups, ticket class, and survival rates to identify key predictive factors.

- Survival rate analysis by gender and class

- Age distribution and correlation studies

- Feature correlation heatmaps

- Missing data assessment and patterns

Port of Embarkation Distribution

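The embarkation chart splits into Cherbourg (43.24%), Queenstown (30.43%), and Southampton (26.32%). These shares are proportional to each port's survival rate, not to the number of passengers who boarded there (most passengers boarded at Southampton). They therefore indicate that Cherbourg passengers were the most likely to survive. This feature correlated with survival due to socioeconomic differences between ports.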

Survival Status Distribution

The dataset shows a class imbalance with 549 passengers who died (61.6%) versus 342 who survived (38.4%). This imbalance was considered during model training to prevent bias toward the majority class.
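These counts also set a reference point. A model that always predicts "died" is already about 61.6% accurate, so the accuracies reported below should be read against that baseline. The minimal standard-library sketch below recomputes the class shares and that baseline from the two counts given above. The project does not say how it accounted for the imbalance, so the inverse-frequency ("balanced") class weights at the end are only an assumed, commonly used option.

```python
from collections import Counter

# Survival labels as reported above: 549 deaths (0) and 342 survivors (1).
labels = [0] * 549 + [1] * 342

counts = Counter(labels)
total = sum(counts.values())
proportions = {cls: n / total for cls, n in counts.items()}

# Always predicting the majority class ("died") achieves this accuracy.
majority_class, majority_count = counts.most_common(1)[0]
baseline_accuracy = majority_count / total

# Assumed imbalance handling: weight each class by total / (n_classes * class_count),
# so the rarer "survived" class counts more during training.
class_weights = {cls: total / (len(counts) * n) for cls, n in counts.items()}

print(f"died: {proportions[0]:.1%}, survived: {proportions[1]:.1%}")
print(f"majority-class baseline accuracy: {baseline_accuracy:.1%}")
print(class_weights)
```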

Data Preprocessing & Feature Engineering

Cleaned the dataset and engineered new features to improve model performance. Handled missing values using statistical imputation and created derived features from existing data.

- Missing value imputation for Age and Cabin

- Created family size feature (SibSp + Parch)

- Extracted titles from passenger names

- One-hot encoding for categorical variables

- Feature scaling and normalization
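The preprocessing steps above can be sketched in plain Python. The sample rows are hypothetical and follow the Kaggle column names. Several details are assumptions, since the project does not specify them: median imputation for Age, min-max scaling for Fare, one-hot encoding applied to `Embarked`, and the title regex. Cabin imputation is left out. `FamilySize` follows the definition given above (SibSp + Parch).

```python
import re
from statistics import median

# Hypothetical sample rows using the Kaggle Titanic column names.
passengers = [
    {"Name": "Smith, Mr. John", "Sex": "male", "Age": 22.0, "SibSp": 1, "Parch": 0, "Fare": 7.0, "Embarked": "S"},
    {"Name": "Doe, Mrs. Jane (Jane Roe)", "Sex": "female", "Age": 38.0, "SibSp": 1, "Parch": 0, "Fare": 71.0, "Embarked": "C"},
    {"Name": "Roe, Miss. Anna", "Sex": "female", "Age": None, "SibSp": 0, "Parch": 0, "Fare": 8.0, "Embarked": "S"},
]

# Names look like "Surname, Title. Given names": capture the text between the comma and the first period.
TITLE_RE = re.compile(r",\s*([^.]+)\.")

def extract_title(name):
    match = TITLE_RE.search(name)
    return match.group(1).strip() if match else "Unknown"

def engineer(rows):
    # Statistics are computed once over all rows (in practice: over the training split only).
    age_median = median(r["Age"] for r in rows if r["Age"] is not None)
    ports = sorted({r["Embarked"] for r in rows if r["Embarked"]})
    fares = [r["Fare"] for r in rows]
    fare_min, fare_max = min(fares), max(fares)

    features = []
    for r in rows:
        f = {
            "Age": r["Age"] if r["Age"] is not None else age_median,  # median imputation
            "FamilySize": r["SibSp"] + r["Parch"],
            "Title": extract_title(r["Name"]),
            "IsFemale": 1 if r["Sex"] == "female" else 0,
            # Min-max scaling of Fare to the range [0, 1].
            "FareScaled": (r["Fare"] - fare_min) / (fare_max - fare_min) if fare_max > fare_min else 0.0,
        }
        # One-hot encoding: one 0/1 column per observed port.
        for port in ports:
            f[f"Embarked_{port}"] = 1 if r["Embarked"] == port else 0
        features.append(f)
    return features

for row in engineer(passengers):
    print(row)
```

In a real pipeline the extracted `Title` would itself be one-hot encoded, and the imputation and scaling statistics would be fitted on the training folds only, to avoid leaking information from the evaluation data.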

Model Training & Evaluation

Trained and evaluated five different machine learning algorithms on the preprocessed data. Used cross-validation and multiple metrics to assess model performance comprehensively.

- Naive Bayes: 76% accuracy (best performer)

- Logistic Regression: 75% accuracy

- Decision Tree: 74% accuracy

- Support Vector Machine: 66% accuracy

- K-Nearest Neighbors: 66% accuracy
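The project mentions cross-validation but not the number of folds or the tooling. The sketch below assumes plain (unstratified) 5-fold cross-validation. Any model is plugged in through a pair of hypothetical `fit`/`predict` functions, and the placeholder majority-class model shows the interface. On the full dataset, that placeholder should land near the 61.6% baseline implied by the class counts.

```python
import random
from collections import Counter

def k_fold_indices(n_samples, k=5, seed=42):
    """Shuffle row indices once and split them into k nearly equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]

def cross_val_accuracy(X, y, fit, predict, k=5, seed=42):
    """Train on k-1 folds, measure accuracy on the held-out fold, and return one score per fold."""
    scores = []
    for fold in k_fold_indices(len(y), k, seed):
        held_out = set(fold)
        train_idx = [i for i in range(len(y)) if i not in held_out]
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        predictions = predict(model, [X[i] for i in fold])
        correct = sum(p == y[i] for p, i in zip(predictions, fold))
        scores.append(correct / len(fold))
    return scores

# Placeholder model: always predict the most common label seen in training.
def fit_majority(X_train, y_train):
    return Counter(y_train).most_common(1)[0][0]

def predict_majority(label, X_test):
    return [label] * len(X_test)

# Usage on toy data (20 rows, 12 of class 0 and 8 of class 1).
X = [[i] for i in range(20)]
y = [0] * 12 + [1] * 8
scores = cross_val_accuracy(X, y, fit_majority, predict_majority, k=5)
print(scores, sum(scores) / len(scores))
```

Reporting the spread of the fold scores alongside their mean helps here, because several models in the list above differ by only one or two percentage points.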

Model Comparison & Selection

Compared all models on accuracy, precision, recall, and F1-score. Selected Naive Bayes as the final model because it had the highest accuracy (76%, one point ahead of Logistic Regression) and is computationally cheap to train on a dataset of this size.

- Performance benchmarking across all models

- Confusion matrix analysis

- ROC curve and AUC evaluation

- Cross-validation for generalization testing
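The metrics listed above all derive from the confusion matrix, except ROC AUC, which uses predicted scores. Below is a minimal standard-library sketch with "survived" (1) as the positive class. The confusion matrix is returned in the same `(tn, fp, fn, tp)` order that scikit-learn's `confusion_matrix(...).ravel()` produces. AUC is computed as the probability that a randomly chosen survivor gets a higher score than a randomly chosen non-survivor, with ties counting half. The toy labels and scores are illustrative only.

```python
def confusion_matrix(y_true, y_pred):
    """Return (tn, fp, fn, tp) for binary labels where 1 = survived."""
    tn = fp = fn = tp = 0
    for t, p in zip(y_true, y_pred):
        if t == 1 and p == 1:
            tp += 1
        elif t == 0 and p == 1:
            fp += 1
        elif t == 1 and p == 0:
            fn += 1
        else:
            tn += 1
    return tn, fp, fn, tp

def classification_metrics(y_true, y_pred):
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred)
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

def roc_auc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for s, t in zip(scores, y_true) if t == 1]
    neg = [s for s, t in zip(scores, y_true) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Toy example: true labels, hard predictions, and predicted survival probabilities.
y_true = [1, 0, 1, 1, 0, 0]
y_pred = [1, 0, 0, 1, 1, 0]
y_score = [0.9, 0.2, 0.4, 0.8, 0.6, 0.1]
print(confusion_matrix(y_true, y_pred))
print(classification_metrics(y_true, y_pred))
print(roc_auc(y_true, y_score))
```

Given the 61.6/38.4 class split, precision and recall on the survivor class are more informative than accuracy alone when models are close.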

Model Performance Comparison

| Model | Accuracy | Strengths / Best Use Case |
|---|---|---|
| Naive Bayes | 76% | Probabilistic classification with independent features |
| Logistic Regression | 75% | Linear decision boundaries, interpretable coefficients |
| Decision Tree | 74% | Non-linear patterns, feature importance analysis |
| Support Vector Machine | 66% | Complex decision boundaries, kernel methods |
| K-Nearest Neighbors | 66% | Instance-based learning, similarity metrics |

🏆 Key Findings

- Naive Bayes emerged as the top performer with 76% accuracy, demonstrating the effectiveness of probabilistic approaches for this classification problem.

- Gender was the strongest predictor of survival, with female passengers having significantly higher survival rates.

- Passenger class (Pclass) strongly correlated with survival—1st class passengers had much better survival odds.

- Age groups showed distinct patterns: Children had higher survival rates, while middle-aged passengers had lower rates.

- Family size influenced outcomes: Passengers traveling with 1-3 family members had better survival chances than solo travelers or those in large families.
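Each finding above comes down to a survival rate computed per group. The small standard-library sketch below shows that calculation. The five passenger rows are hypothetical and serve only to exercise the code. Family size uses the SibSp + Parch definition from the preprocessing step, bucketed as solo, 1-3, or 4+ relatives.

```python
from collections import defaultdict

def survival_rate_by(rows, key):
    """Group rows with `key(row)` and return the fraction of each group that survived."""
    totals = defaultdict(int)
    survivors = defaultdict(int)
    for row in rows:
        group = key(row)
        totals[group] += 1
        survivors[group] += row["Survived"]
    return {group: survivors[group] / totals[group] for group in totals}

def family_bucket(row):
    relatives = row["SibSp"] + row["Parch"]
    if relatives == 0:
        return "solo"
    if relatives <= 3:
        return "small (1-3)"
    return "large (4+)"

# Hypothetical rows for illustration only.
sample = [
    {"Sex": "female", "Pclass": 1, "SibSp": 1, "Parch": 0, "Survived": 1},
    {"Sex": "male", "Pclass": 3, "SibSp": 0, "Parch": 0, "Survived": 0},
    {"Sex": "female", "Pclass": 3, "SibSp": 1, "Parch": 2, "Survived": 1},
    {"Sex": "male", "Pclass": 1, "SibSp": 0, "Parch": 0, "Survived": 1},
    {"Sex": "male", "Pclass": 3, "SibSp": 4, "Parch": 2, "Survived": 0},
]

print(survival_rate_by(sample, lambda r: r["Sex"]))
print(survival_rate_by(sample, lambda r: r["Pclass"]))
print(survival_rate_by(sample, family_bucket))
```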

Data Visualizations

The project includes comprehensive visualizations to understand survival patterns:

📊 Survival by Gender

Bar charts showing dramatic survival rate differences between male and female passengers

📈 Class Distribution

Visualization of survival rates across 1st, 2nd, and 3rd class passengers

🔥 Feature Correlation Heatmap

Correlation matrix showing relationships between all features and survival

📉 Age Distribution Analysis

Histograms and box plots analyzing survival patterns by age groups

🎯 Model Performance Metrics

Comparative charts showing accuracy, precision, recall, and F1-scores

📍 Confusion Matrices

Detailed confusion matrices for each model showing true/false positives and negatives


Lessons Learned & Insights

💡 Model Selection Matters

Different algorithms excel at different tasks. Despite its simplifying assumption that features are independent given the class, Naive Bayes worked surprisingly well on this dataset, outperforming SVM and KNN, which both reached only 66% accuracy.
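The independence assumption is easiest to see in code. The project does not say which Naive Bayes variant it used, so the Gaussian form (the one scikit-learn provides as `GaussianNB`) is an assumption here. In the plain-Python sketch below, each class's likelihood is a product of per-feature normal densities, computed as a sum of log densities. The variance smoothing mirrors `GaussianNB`'s `var_smoothing` idea: a small fraction of the largest feature variance is added for numerical stability. The toy data is illustrative only.

```python
import math
from collections import defaultdict

class GaussianNaiveBayes:
    """Minimal Gaussian Naive Bayes: each feature is an independent normal distribution within each class."""

    def __init__(self, var_smoothing=1e-9):
        self.var_smoothing = var_smoothing

    @staticmethod
    def _mean_var(values):
        mean = sum(values) / len(values)
        return mean, sum((v - mean) ** 2 for v in values) / len(values)

    def fit(self, X, y):
        n_features = len(X[0])
        # Add a small fraction of the largest feature variance so zero variances do not break the math.
        largest = max(self._mean_var([row[j] for row in X])[1] for j in range(n_features))
        eps = self.var_smoothing * (largest or 1.0)
        by_class = defaultdict(list)
        for row, label in zip(X, y):
            by_class[label].append(row)
        # Per class: log prior and a (mean, variance) pair for every feature.
        self.params_ = {}
        for label, rows in by_class.items():
            stats = [self._mean_var([r[j] for r in rows]) for j in range(n_features)]
            self.params_[label] = (math.log(len(rows) / len(X)), [(m, v + eps) for m, v in stats])
        return self

    def _log_joint(self, row, label):
        log_prior, stats = self.params_[label]
        # Independence: log P(x | class) is the sum of per-feature log densities.
        return log_prior + sum(
            -0.5 * math.log(2 * math.pi * var) - (x - mean) ** 2 / (2 * var)
            for x, (mean, var) in zip(row, stats)
        )

    def predict(self, X):
        # Pick the class with the highest (unnormalized) log posterior for each row.
        return [max(self.params_, key=lambda c: self._log_joint(row, c)) for row in X]

# Usage on toy data with two well-separated classes.
X = [[1.0, 20.0], [1.2, 22.0], [0.9, 19.0], [5.0, 60.0], [5.5, 58.0], [4.8, 61.0]]
y = [0, 0, 0, 1, 1, 1]
model = GaussianNaiveBayes().fit(X, y)
print(model.predict([[1.1, 21.0], [5.2, 59.0]]))
```

Because the model only needs a mean and a variance per feature and class, training is a single pass over the data. That fits the efficiency argument made for choosing it.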

🎯 Feature Engineering Impact

Creating derived features like family size and extracting titles from names significantly improved model accuracy. Good features matter more than complex algorithms.

📊 EDA is Critical

Thorough exploratory data analysis revealed crucial patterns (gender, class, age) that guided feature selection and model interpretation.

⚖️ Handling Missing Data

Strategic imputation of missing values (Age, Cabin) preserved data integrity while maximizing the training set size for better model performance.
