Enhanced Q&A Chatbot with Groq & LangChain

Fast AI-powered conversational assistant with multiple LLM options and real-time adjustable parameters

Project Overview

An enhanced Q&A chatbot built with Streamlit that leverages Groq's ultra-fast inference API and LangChain's powerful orchestration framework. The application provides an intuitive interface for users to interact with multiple state-of-the-art language models with adjustable parameters for customized responses.

Challenge

Traditional chatbot interfaces often lack flexibility in model selection and parameter tuning. Users need a simple yet powerful interface that allows them to experiment with different LLMs and adjust response characteristics (temperature, max tokens) in real-time without diving into code.

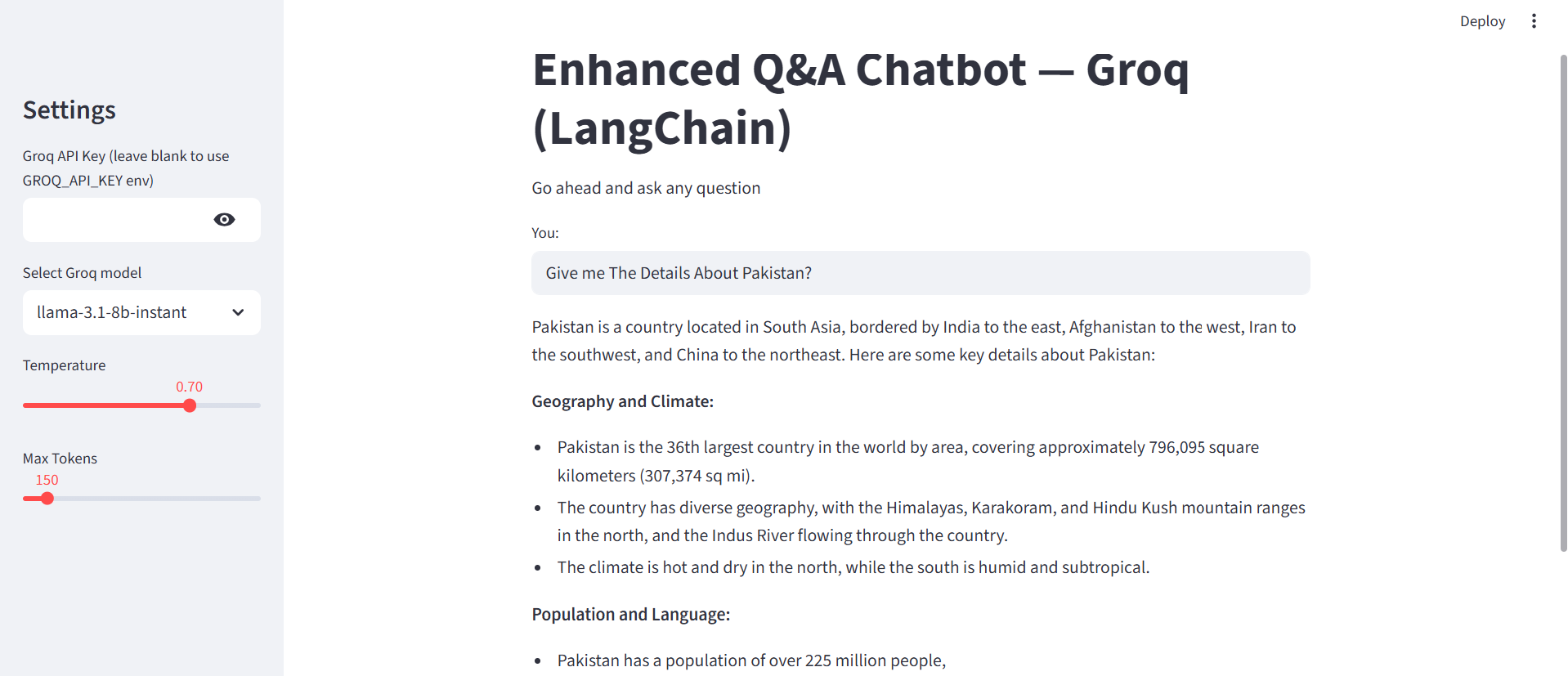

Live Streamlit interface showing the chatbot answering "Give me The Details About Pakistan?" with adjustable parameters (Temperature: 0.70, Max Tokens: 150) using Llama 3.1 8B Instant model via Groq API.

Technical Approach

Architecture & Framework Selection

Designed a modular architecture using LangChain for LLM orchestration and Streamlit for rapid prototyping of the user interface. Integrated Groq API for ultra-fast inference speeds.

- Streamlit: Interactive web interface with real-time updates

- LangChain: Prompt engineering and LLM chain orchestration

- Groq API: Sub-2-second inference for multiple open-source models

- LangSmith: Tracing and monitoring for production debugging

Prompt Engineering & Chain Design

Implemented a structured prompt template using LangChain's ChatPromptTemplate with system and human message roles for consistent behavior across different models.

from langchain_core.prompts import ChatPromptTemplate

prompt = ChatPromptTemplate.from_messages([

("system", "You are a helpful assistant. Please respond to the user queries."),

("human", "{question}"),

])

# Chain: Prompt → LLM → Parser

chain = prompt | llm | output_parser

answer = chain.invoke({"question": question})Multi-Model Integration

Integrated four different LLM models accessible through Groq's API, each with unique characteristics for different use cases.

- Llama 3.1 8B Instant: Fast, balanced performance for general queries

- GPT-OSS 120B: Larger model for complex reasoning tasks

- Qwen3 32B: Multilingual capabilities and instruction following

- Gemma2 9B-IT: Instruction-tuned for high accuracy

UI/UX & Parameter Controls

Built an intuitive Streamlit interface with sidebar controls for model selection and hyperparameter tuning. Implemented secure API key handling with environment variable fallback.

- Model selector dropdown with 4 options

- Temperature slider (0.0 - 1.0) for creativity control

- Max tokens slider (50 - 1024) for response length

- Secure API key input with password masking

- Real-time response generation with loading spinner

Technical Stack

Framework

- Streamlit 1.50.0

- Python 3.10+

LLM Orchestration

- LangChain 0.3.27

- LangChain-Groq 0.3.8

- LangSmith (tracing)

APIs & Services

- Groq API (inference)

- LangChain API

Environment

- python-dotenv

- Virtual environment

Key Features

🚀 Ultra-Fast Inference

Leverages Groq's optimized inference engine for sub-2-second response times, significantly faster than traditional cloud LLM APIs.

🎛️ Dynamic Parameter Tuning

Real-time adjustment of temperature and max tokens without restarting the application, allowing users to fine-tune response creativity and length on-the-fly.

🤖 Multi-Model Support

Switch between 4 different LLMs seamlessly, each optimized for different tasks: speed, reasoning, multilingual support, or instruction following.

🔍 LangSmith Tracing

Built-in observability with LangSmith for debugging chain execution, monitoring latency, and analyzing model performance in production.

🔐 Secure API Management

Supports both environment variable and UI-based API key input with secure password masking, enabling flexible deployment options.

💬 Clean Conversational UI

Intuitive Streamlit interface with clear input/output separation, loading indicators, and helpful prompts for better user experience.

Results & Impact

⚡ Performance

- Sub-2-second average response time

- Real-time parameter adjustment

- Zero cold-start delays with Groq

- Efficient token usage monitoring

🎯 Functionality

- 4 production-ready LLM models

- Accurate, contextual responses

- Customizable system prompts

- Extensible chain architecture

📈 Developer Experience

- Simple 3-file project structure

- Easy local deployment (one command)

- Clear separation of concerns

- Production-ready with tracing

Lessons Learned

Key Insights

- Groq's Speed Advantage: Groq's inference speed (sub-2s) is significantly faster than standard cloud APIs, making real-time chat experiences viable even with larger models.

- LangChain Abstraction: LangChain's chain abstraction simplifies swapping between different LLM providers without rewriting application logic.

- Parameter Sensitivity: Temperature and max tokens have dramatic effects on response quality—exposing these controls to users increases flexibility but requires good defaults.

- Observability Matters: LangSmith tracing is invaluable for debugging prompt issues and understanding model behavior in production.

- Streamlit Limitations: While great for prototyping, Streamlit's stateful session management can be tricky for complex conversation history—consider alternatives for production chat applications.

Interested in Building GenAI Applications?

I can help you build production-ready chatbots, RAG systems, and LLM-powered applications tailored to your business needs.